The problem of interoperability in the E&C industry

When talking about interoperability in the E&C industry using digital media, we immediately think of 'file exchange'. But does interoperability only concern digital formats of information?

Interoperable

According to Wikipedia, Interoperability is the ability of a system (computerized or not) to communicate transparently (or as close to it as possible) with another system (similar or not).

When we say that a model is interoperable, we are saying that its information can be accessed by systems other than the one that authored it, rather than being locked into a proprietary format. The exception is when the entire information workflow is restricted to a set of applications that all work with the same proprietary format. In that case, it is necessary to guarantee that the whole information flow passes through actors of the same domain, be it a single company or one link of the production chain, or that the format has been universally adopted by the market.

As I write this article in Microsoft Word, and more importantly keep it in that format, I know that I will need an up-to-date subscription to access it. I don't see this as a problem: Windows is the most popular desktop operating system on the planet, and because of that even other systems take care to maintain a certain compatibility with it, which ensures that I can migrate to another operating system without losing my precious articles. But does this apply to an industry as fragmented as E&C? Can an organisation entrust all its data to a proprietary format on the promise that the format will always be available and accessible, given not only the subscriptions but also the compatibility between the versions released periodically, many of which make no significant difference?

API

An API (Application Programming Interface), a set of routines and standards that allows applications to interface with one another, is one way to ensure interoperability of information. But even here, open and universal standardisation is important. When APIs are used for communication between different and independent systems, both the data transmission protocol and the data format are usually standardised. This guarantees that any duly authorised developer, be it a company or a professional providing a service, can consume information in a standardised way.

There are different ways of consuming information through an API. Systems usually use what we call a REST API to expose information to other applications following a specific architecture (REST), usually over the HTTP or HTTPS protocol, widely used in internet connections. There are many such public APIs, through which any application can consume information such as weather, maps, public government data, etc. A system can create a REST API to allow other systems to consume its information, subject to an authorisation protocol, and thus guarantee interoperability between applications.
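To make the idea concrete, here is a minimal sketch of a system exposing model data through a REST-style endpoint and an independent client consuming it with an authorisation token. Everything specific in it is an assumption made for illustration: the `/elements/<id>` path, the `door-001` record and its fields, and the `demo-token` bearer token are hypothetical and do not come from any real BIM product. It uses only the Python standard library and runs the server locally.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical data and token, for illustration only.
ELEMENTS = {"door-001": {"type": "door", "width_mm": 900, "fire_rating_min": 60}}
API_TOKEN = "demo-token"


class ModelApiHandler(BaseHTTPRequestHandler):
    """Serves GET /elements/<id> as JSON, but only to authorised callers."""

    def do_GET(self):
        if self.headers.get("Authorization") != f"Bearer {API_TOKEN}":
            self._send(401, {"error": "unauthorised"})
            return
        element_id = self.path.rsplit("/", 1)[-1]
        element = ELEMENTS.get(element_id)
        if element is None:
            self._send(404, {"error": "not found"})
        else:
            self._send(200, element)

    def _send(self, status, payload):
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        # Silence the default per-request logging.
        pass


def fetch_element(host, port, element_id, token):
    """Consumes the API the way any independent client application could."""
    conn = HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", f"/elements/{element_id}",
                     headers={"Authorization": f"Bearer {token}"})
        response = conn.getresponse()
        return response.status, json.loads(response.read().decode("utf-8"))
    finally:
        conn.close()


def demo():
    # Port 0 asks the operating system for any free port.
    server = HTTPServer(("127.0.0.1", 0), ModelApiHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        port = server.server_port
        authorised = fetch_element("127.0.0.1", port, "door-001", API_TOKEN)
        rejected = fetch_element("127.0.0.1", port, "door-001", "wrong-token")
        return authorised, rejected
    finally:
        server.shutdown()
        server.server_close()
```

Calling `demo()` returns one response for the authorised request and one for the rejected request. The client needs to know only the protocol (HTTP), the data format (JSON) and the token, not how the server stores its model internally.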

Another form of interoperability is the programmatic API. A developer publishes a library in a specific language that grants algorithmic access to data in their proprietary format. This allows other developers to create extensions, known as plugins or add-ons. Thus, the developer of one application can create an add-on that exports model information to the proprietary format of another application, maintaining interoperability between the two. The big problem with this approach is that it guarantees communication only between these two applications, without using an open and universal standard. It is like an agreement between two parties only.

Imagine if Morse code, widely used as a system for representing letters through coded signals, had a different standard for each country that used it. We would have more than a hundred standards in use over the same equipment and form of communication. Why not, then, use an open and universal standard?
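The cost of two-party agreements can be estimated with simple arithmetic. The sketch below assumes that every application must be able to send data to every other one, and that each direction needs its own converter. Both assumptions are simplifications for illustration, not figures from any survey. With a shared open standard, each application needs only one exporter and one importer.

```python
def pairwise_converters(n_apps: int) -> int:
    """Converters needed when every application talks directly to every other.

    Each ordered pair (A -> B) needs its own converter, so n * (n - 1).
    """
    return n_apps * (n_apps - 1)


def open_standard_converters(n_apps: int) -> int:
    """Converters needed when everyone reads and writes one shared standard.

    Each application needs one exporter and one importer, so 2 * n.
    """
    return 2 * n_apps


for n in (5, 10):
    print(n, pairwise_converters(n), open_standard_converters(n))
# prints:
# 5 20 10
# 10 90 20
```

The pairwise count grows with the square of the number of applications, while the open-standard count grows linearly.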

Data semantics

For data to produce information, they need to have meaning within a specific context. Imagine a 'door'. This 'door' can have many meanings depending on the context. It can represent a simple component in a materials context; a supply and installation service in the site measurement context; and, in the functional context, a building element that allows passage between different spaces or prevents the spread of fire. Interoperability is not restricted to an exchange of files: it is an exchange of data that produces information in different contexts without losing its meaning.
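The 'door' example can be sketched in a few lines: a single data record, read through three context-specific views. The field names (`width_mm`, `fire_rating_min`, `connects`, and so on) and the three view functions are hypothetical, chosen only to mirror the materials, measurement and functional contexts described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Door:
    # Hypothetical attributes, for illustration only.
    tag: str
    width_mm: int
    height_mm: int
    material: str
    fire_rating_min: int  # 0 means the door is not fire rated
    connects: tuple       # the two spaces the door links


def materials_view(door: Door) -> dict:
    """Materials context: the door is a component to be procured."""
    return {"item": f"{door.material} door",
            "size_mm": (door.width_mm, door.height_mm)}


def measurement_view(door: Door) -> dict:
    """Site measurement context: the door is a supply-and-install service."""
    return {"service": "supply and install door", "unit": "each", "quantity": 1}


def functional_view(door: Door) -> dict:
    """Functional context: the door allows passage and may act as a fire barrier."""
    return {"passage_between": door.connects,
            "fire_barrier": door.fire_rating_min > 0}


door = Door("D-01", 900, 2100, "timber", 60, ("Corridor", "Office 1"))
print(materials_view(door))
print(measurement_view(door))
print(functional_view(door))
```

The data is entered once. What changes from view to view is the meaning each context draws from it, and that meaning is what an exchange has to preserve.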

The importance of an interoperability standard concerned not only with the file format but also with the semantics of the data led the industry to create an open, international standard, standardized by ISO.

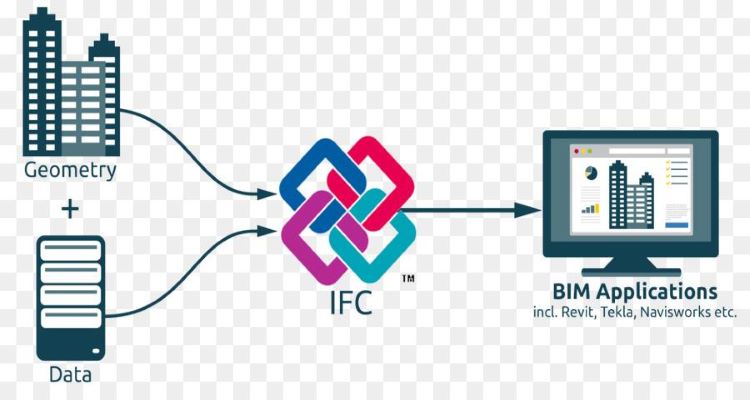

IFC

IFC (Industry Foundation Classes) is a standard that aims to describe the data and elements of an E&C project throughout its life cycle. It is based on an object-oriented data modelling method. This means that real-world elements are digitally described as objects with attributes, and that not only the objects but also the relationships between them are described, in a way that preserves their meaning for the entire industry production chain, throughout the life cycle. The door, then, is modelled in this file, and its data should produce information for all the different contexts, from design, through construction, to the management of its maintenance.
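As a rough illustration of "objects plus relationships", the sketch below models a door, the opening it fills, the wall that opening cuts, and the storey that contains them. The class names in the strings (`IfcDoor`, `IfcWall`, `IfcOpeningElement`, `IfcBuildingStorey`, `IfcRelVoidsElement`, `IfcRelFillsElement`, `IfcRelContainedInSpatialStructure`) are real IFC entity names, but the Python classes `Element` and `Relationship` are simplified stand-ins invented for this example. They are not the IFC schema and not the API of any IFC library.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    # Simplified stand-in: real IFC entities carry many more attributes,
    # and real GlobalIds are 22-character encoded identifiers.
    global_id: str
    ifc_class: str
    name: str
    properties: dict = field(default_factory=dict)


@dataclass
class Relationship:
    # Simplified to one-to-one; some IFC relationships relate one object to many.
    ifc_class: str
    relating: Element
    related: Element


wall = Element("w1", "IfcWall", "Wall A")
opening = Element("o1", "IfcOpeningElement", "Opening in Wall A")
door = Element("d1", "IfcDoor", "Door 01", {"FireRating": "60 min"})  # hypothetical value
storey = Element("s1", "IfcBuildingStorey", "Ground floor")

relationships = [
    Relationship("IfcRelVoidsElement", relating=wall, related=opening),
    Relationship("IfcRelFillsElement", relating=opening, related=door),
    Relationship("IfcRelContainedInSpatialStructure", relating=storey, related=wall),
    Relationship("IfcRelContainedInSpatialStructure", relating=storey, related=door),
]


def host_wall_of(element, rels):
    """Follows door -> opening -> wall through the relationship objects."""
    for fills in rels:
        if fills.ifc_class == "IfcRelFillsElement" and fills.related is element:
            for voids in rels:
                if (voids.ifc_class == "IfcRelVoidsElement"
                        and voids.related is fills.relating):
                    return voids.relating
    return None


print(host_wall_of(door, relationships).name)  # prints "Wall A"
```

Because the relationships are data in their own right, any application that reads the model can answer "which wall hosts this door?" without guessing from geometry.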

Because the construction industry is so fragmented, different participants in different phases are forced to use different applications: applications for structural analysis, project modelling, site management, budgeting, asset management, etc., all sharing the same data in an integrated way. It seems impossible that all these actors will use the same application with the same proprietary format. And even if they do, is it safe to keep all this information in a proprietary format?

There is a misconception that the IFC standard is flawed and does not serve its users. In fact, the standard is quite mature; it still has a lot to improve, but it meets all the requirements of the whole life cycle of a construction. What happens is that few software applications export and convert information in a standardized way according to the schema. There is a correct place for each element and a class for each object, yet many applications either don't allow the proper configuration or simply scramble the data inside the format and don't follow the standard properly.
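One way to picture "a class for each object" is a small check that flags elements exported under the wrong class, for instance a door written out as the generic `IfcBuildingElementProxy`. The sketch below is only an assumption of how such a check might look: the `EXPECTED_CLASS` mapping, the record layout and the sample export are hypothetical, although the IFC class names themselves are real.

```python
# Hypothetical mapping from an authoring-tool category to the IFC class
# one would expect it to be exported as.
EXPECTED_CLASS = {
    "door": "IfcDoor",
    "wall": "IfcWall",
    "window": "IfcWindow",
}


def misclassified(exported):
    """Returns (name, actual class, expected class) for each mismatch."""
    issues = []
    for item in exported:
        expected = EXPECTED_CLASS.get(item["category"])
        if expected is not None and item["ifc_class"] != expected:
            issues.append((item["name"], item["ifc_class"], expected))
    return issues


# Hypothetical export result, for illustration only.
export = [
    {"name": "Door 01", "category": "door", "ifc_class": "IfcDoor"},
    {"name": "Door 02", "category": "door", "ifc_class": "IfcBuildingElementProxy"},
    {"name": "Wall A", "category": "wall", "ifc_class": "IfcWall"},
]

print(misclassified(export))
# prints [('Door 02', 'IfcBuildingElementProxy', 'IfcDoor')]
```

A door exported as a proxy may still look right in a viewer, but downstream applications that search for doors will not find it, which is exactly the kind of data loss that gets blamed on the standard itself.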

Open standard interoperability

Brazil is still moving slowly in the adoption of BIM. But bearing in mind that BIM is about technology, processes and policies, believing that we will reach high levels of maturity with proprietary formats, communicating only through APIs, is a little utopian. The adoption of an open and certified standard becomes important from the first maturity levels of BIM adoption, as the only way to ensure that the more than 200 certified applications can consume information correctly, transparently, with quality and without data loss.

Learning about the IFC standard ensures not only a good understanding of how information should be exchanged between applications, but also an in-depth, practical understanding of why data is distributed in a certain way, taking into account how it will be used and by whom.

Author: Carlos Dias, BIM Specialist - head of the Construction Technology sector of CTCEA - Brazilian Org. for the Scientific and Technological Development of Airspace Control.

Parametric design with Visual Programming in BIM course at Zigurat.

Source: https://www.e-zigurat.com/blog/pt-br/problema-da-interoperabilidade-industria-ec/